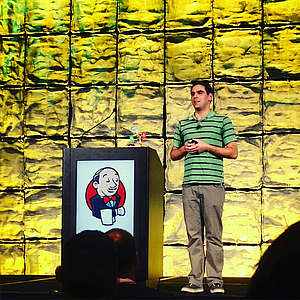

Tomas Norre Mikkelsen and I recently had the opportunity to attend the Jenkins User Conference U.S. West in Santa Clara, California.

The conference on the U.S. West Coast is the largest event of its kind in the world for users and developers of the continuous integration system Jenkins, which is a core element of software development at AOE. This made the event particularly valuable, and it was exciting to gain insights into the future development of the project. In addition, we learned how large corporations such as Google and Yahoo! use Jenkins.

Below, I would like to present some of the concepts and methodologies that ran like a common thread through the numerous presentations.

Probably the most frequently discussed scenarios concerned the interaction between Jenkins and the virtualization platform Docker. The key issue here was creating containers with the help of Jenkins as part of a continuous delivery pipeline. Additionally, running Jenkins itself within a container is becoming increasingly important, as this is one prerequisite for simple scaling as well as operation in the cloud. In several presentations, the speakers talked about clusters with several hundred Jenkins slave instances.

It is also worth mentioning that Jenkins was a central theme of this year's international DockerCon conference.

From a single, individually managed Jenkins instance (pets) to cluster management (cattle): automating all of the processes involved is a huge challenge in large, auto-scaling Jenkins installations. The foundation is the concept of “Everything as Code”, the programmatic description of infrastructure, configuration and the build process, among other things. For example, the Jenkins Workflow Plugin was presented in this context, as was the Jenkins-independent Blazemeter Taurus for the simplified creation of performance tests based on Apache JMeter.

Jenkins is often used not only for automating builds, but also for test automation. In both cases it is crucial to give all stakeholders rapid feedback on the impact that changes and new features have on the performance and stability of the overall product. Here, the continuous gathering of metrics at various points of the build process is considered essential for automatically telling “good” builds from “bad” ones. Bad builds can then be discarded, while good builds are promoted and continue through the delivery pipeline to the production system.
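To make the idea of metric-based gating a little more concrete, here is a minimal sketch in Python. It is not taken from any of the talks and does not use a Jenkins API: the metric names, the thresholds and the `triage` helper are all assumptions chosen purely for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class BuildMetrics:
    """Metrics gathered for one build (hypothetical fields, for illustration)."""
    build_id: str
    test_pass_rate: float    # fraction of tests that passed, 0.0 to 1.0
    p95_response_ms: float   # 95th percentile response time from a load test


# Hypothetical quality gates; real values depend on the product.
MIN_PASS_RATE = 1.0
MAX_P95_MS = 500.0


def is_good(metrics: BuildMetrics) -> bool:
    """A build is 'good' only if it passes every gate."""
    return (
        metrics.test_pass_rate >= MIN_PASS_RATE
        and metrics.p95_response_ms <= MAX_P95_MS
    )


def triage(builds: Iterable[BuildMetrics]) -> Tuple[List[str], List[str]]:
    """Split builds into IDs to promote and IDs to discard."""
    promoted: List[str] = []
    discarded: List[str] = []
    for build in builds:
        (promoted if is_good(build) else discarded).append(build.build_id)
    return promoted, discarded


if __name__ == "__main__":
    sample = [
        BuildMetrics("101", 1.0, 320.0),   # passes both gates
        BuildMetrics("102", 0.97, 310.0),  # failing tests
        BuildMetrics("103", 1.0, 780.0),   # too slow under load
    ]
    promoted, discarded = triage(sample)
    print("promote:", promoted)
    print("discard:", discarded)
```

In a real pipeline, the metrics would come from the test and performance stages of the build, and promotion would trigger the next stage rather than just returning a list.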

Finally, I would like to highlight the talk by Fabrizio Branca. Not only did he, as usual, share his well-founded insights into the development and continuous delivery workflows at AOE, he also drew numerous positive responses from the participants for his presentation of creative in-house developments in the field of the “Internet of Things”.

The conference was successful and exciting, due in no small part to the venue in the heart of Silicon Valley and the numerous participants and speakers from local companies. I enjoyed it tremendously and am looking forward to implementing and using my newly gained knowledge in the course of my daily work.